ClawHub Vulnerability Let Attackers Manipulate Rankings to Become the #1 Skill

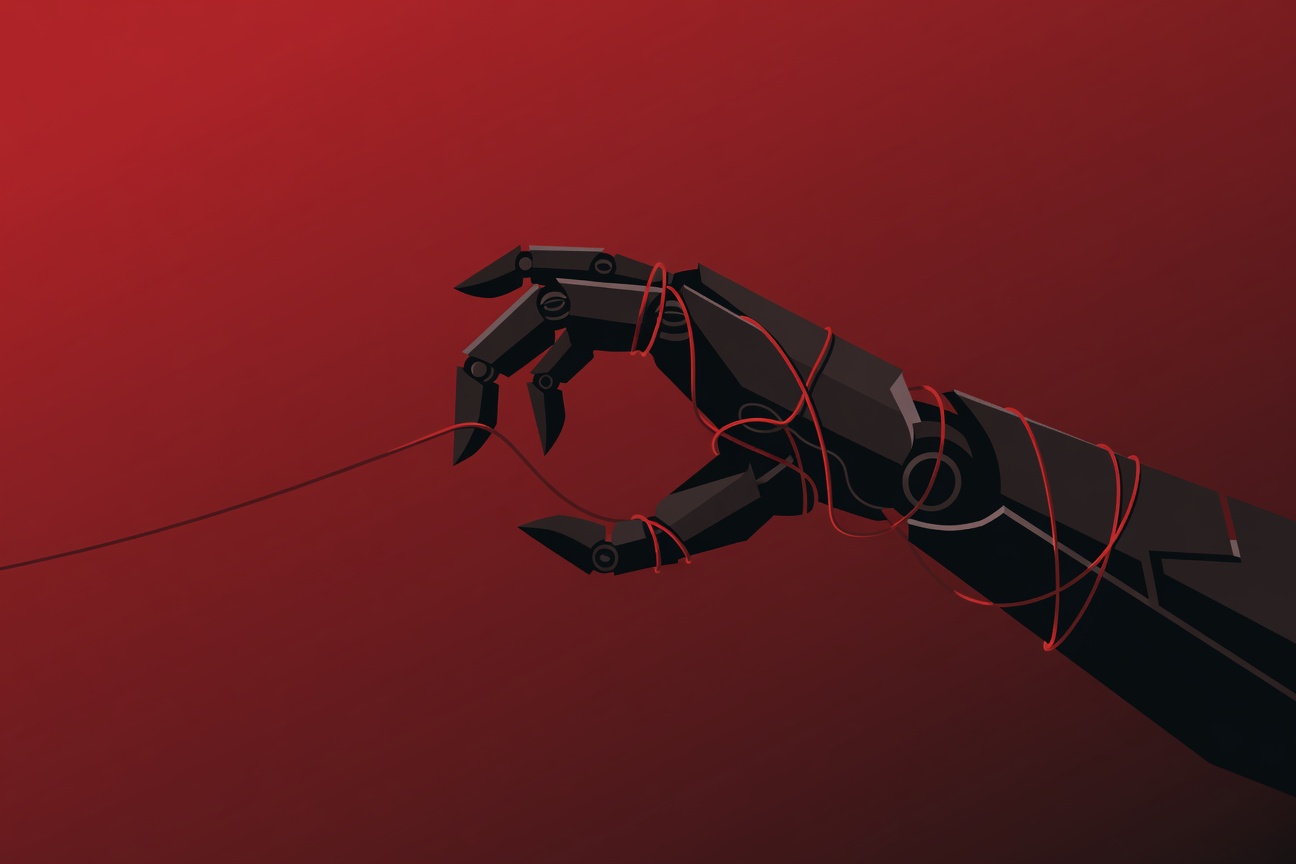

If you’ve ever installed a ClawHub skill because it had thousands of downloads and ranked #1 in its category, you may have been manipulated. Security researchers at Silverfort have disclosed a critical vulnerability in ClawHub, the public skills registry for the OpenClaw agentic ecosystem. The flaw allowed attackers to artificially inflate download counts for any skill in the registry, gaming the trust signal that both human users and autonomous AI agents rely on to evaluate packages. Once at the top, a malicious skill could be automatically installed by agents configured to auto-upgrade, turning a rankings exploit into a full-blown supply chain attack. ...
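To make the risk concrete, here is a minimal toy model of why a raw download count is a fragile trust signal. It does not reproduce the actual ClawHub flaw, whose technical details are not given above. The `ToyRegistry` class, its methods, and the skill names are all hypothetical, and the unauthenticated counter is an assumption made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    downloads: int = 0


class ToyRegistry:
    """Hypothetical in-memory registry; this is NOT ClawHub's real API."""

    def __init__(self) -> None:
        self.skills: dict[str, Skill] = {}

    def publish(self, name: str, downloads: int = 0) -> None:
        self.skills[name] = Skill(name, downloads)

    def record_download(self, name: str) -> None:
        # Assumed weakness: every request is counted, with no
        # authentication, rate limiting, or deduplication.
        self.skills[name].downloads += 1

    def top_skill(self) -> Skill:
        # Ranking relies on the raw download count alone.
        return max(self.skills.values(), key=lambda s: s.downloads)


def agent_pick(registry: ToyRegistry) -> str:
    # A hypothetical auto-installing agent that trusts whatever ranks first.
    return registry.top_skill().name


registry = ToyRegistry()
registry.publish("trusted-skill", downloads=5000)
registry.publish("malicious-skill")

before = agent_pick(registry)

# The attacker replays the download request until their skill overtakes the leader.
for _ in range(5001):
    registry.record_download("malicious-skill")

after = agent_pick(registry)
print(before, "->", after)
```

In this sketch nothing about the malicious skill changes except its counter, yet the agent's choice flips. That is the core of the problem described above: any consumer, human or automated, that treats popularity as a proxy for safety inherits whatever weaknesses the counting mechanism has.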