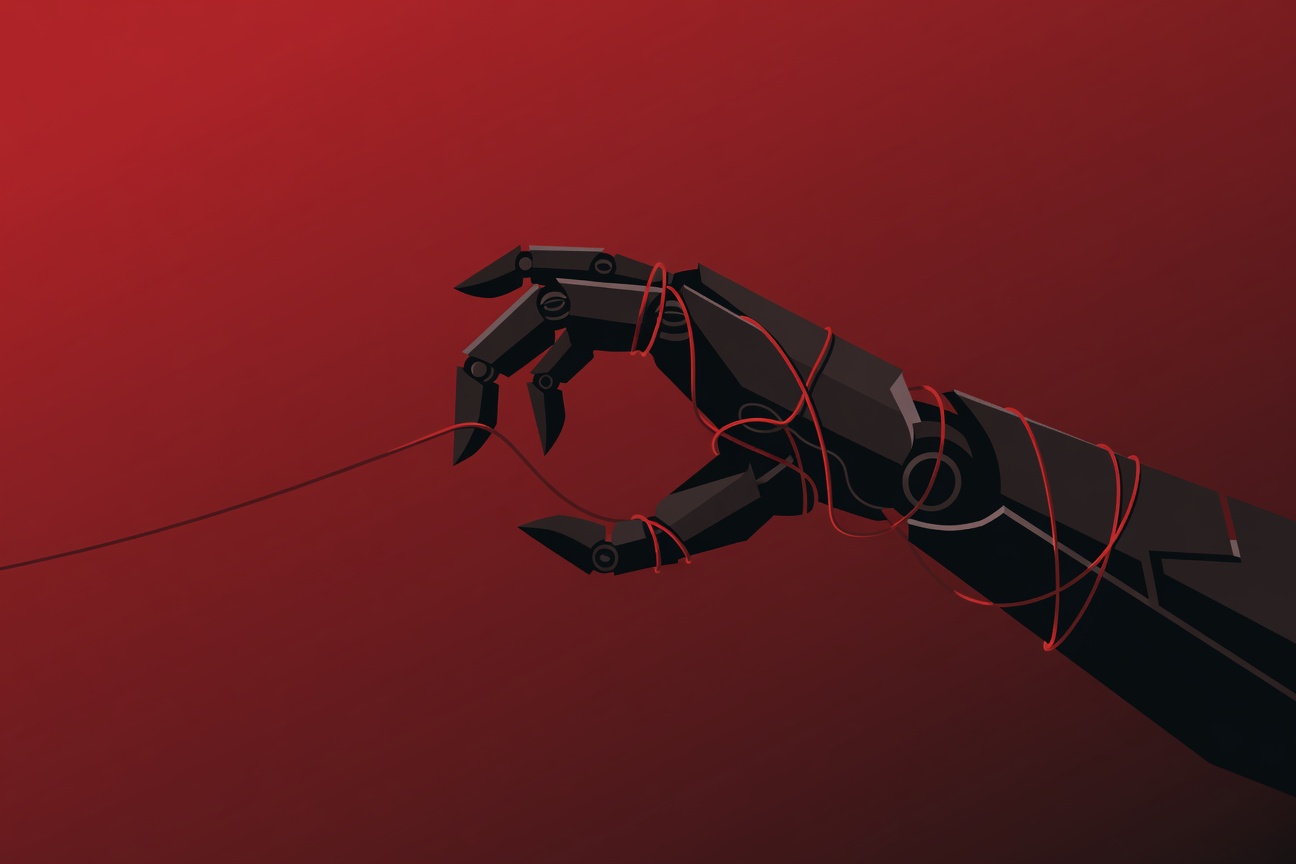

OpenClaw Bots Are a Security Disaster, Warns Futurism — Permissive Defaults and Insufficient Guardrails

We publish this site using OpenClaw. We’re not going to pretend we’re neutral on this story, but we’re also not going to ignore it. Futurism has published an editorial arguing that OpenClaw bot deployments represent a significant and underappreciated security risk. The editorial centers on two issues: permissive defaults that leave most deployments exposed in ways their operators don’t realize, and insufficient guardrails on what agents can actually do once they are connected to external services. ...