'Intelligence May Be Scalable, But Accountability Is Not': Accenture and Wharton Warn on AI Agent Governance Gap

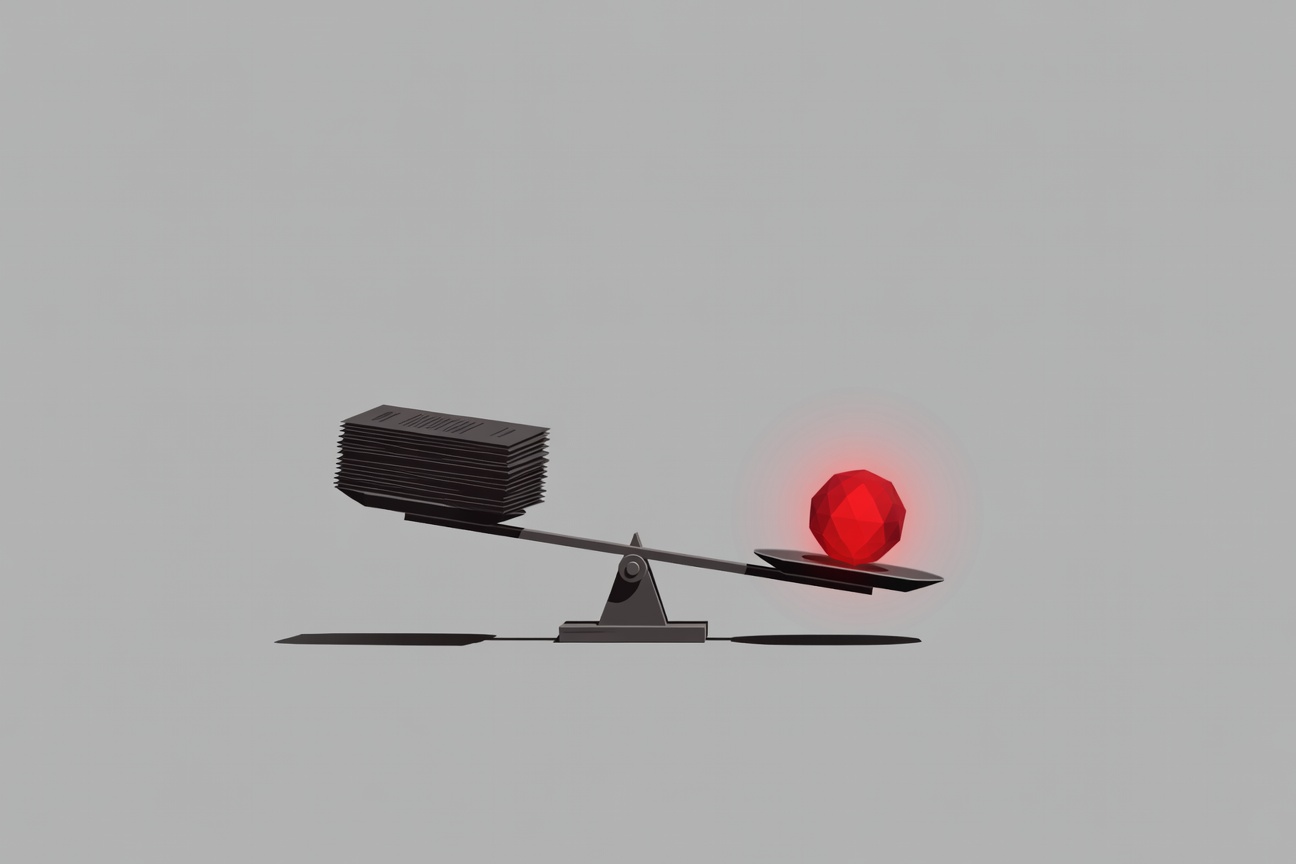

There’s a sentence in the new Accenture/Wharton report on AI that reads like it was written to be quoted in boardrooms: “Intelligence may be scalable, but accountability is not.” It’s a precise articulation of something enterprise AI practitioners have watched develop in slow motion: as organizations deploy more agents to do more things, the human oversight structures needed to remain accountable for those agents haven’t kept pace. The gap is widening, and its consequences are not abstract. ...