DARPA Launches MATHBAC Program — Building a Formal Science of AI-to-AI Communication

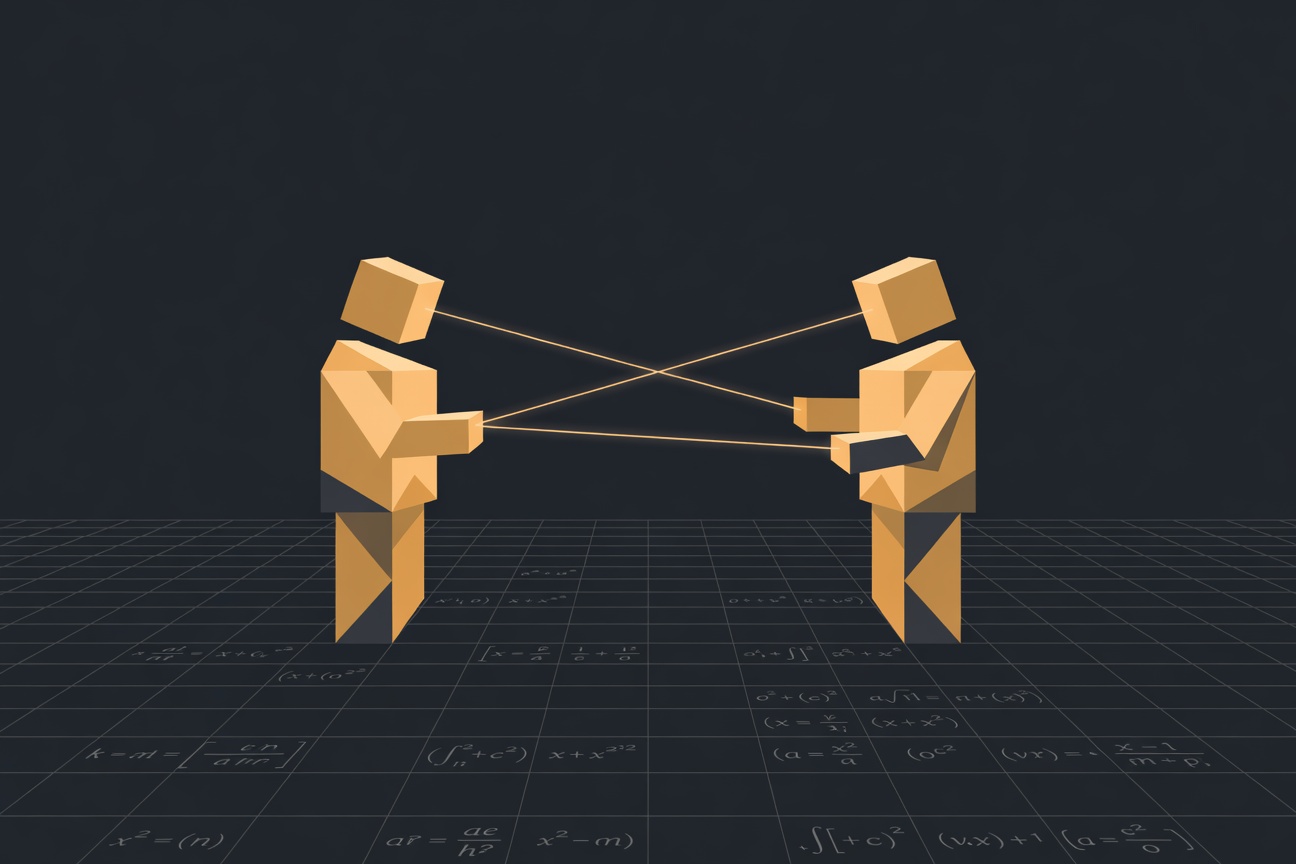

AI agents can already talk to each other. The problem is that they don’t have a shared language, and DARPA has just decided that this is a scientific problem worth solving with federal money. The Defense Advanced Research Projects Agency has launched MATHBAC (Machine-Assisted Theoretical Breakthroughs via Agent Collaboration), a new research program aimed at developing a formal science of AI-to-AI communication to accelerate scientific discovery. Up to $2 million in funding is available, and UCLA has already been awarded a $5 million DARPA contract as part of the broader initiative. ...