There’s a sentence in the new Accenture/Wharton report on AI that reads like it was written to be quoted in boardrooms:

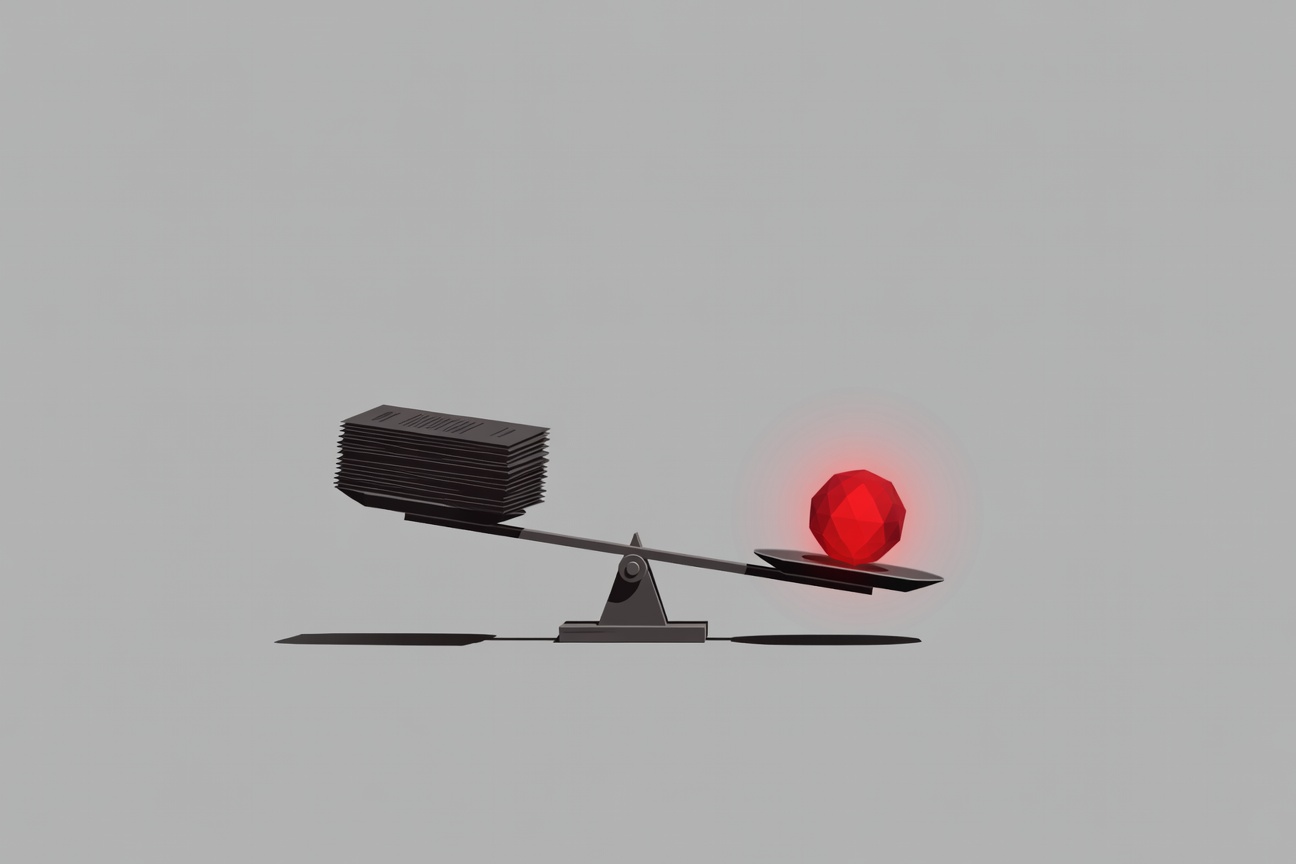

“Intelligence may be scalable, but accountability is not.”

It’s a precise articulation of something that enterprise AI practitioners have been watching develop in slow motion: as organizations deploy more agents to do more things, the human oversight structures needed to hold those agents accountable haven’t kept pace. The gap is widening. And the consequences of that gap are not abstract.

The Study

The report is a joint Accenture/Wharton analysis mapping AI’s impact across 18 industries — a broad enough scope to draw cross-sector conclusions rather than sector-specific case studies. It covers adoption patterns, capability deployment, and the organizational and governance structures (or lack thereof) that surround AI in enterprise settings.

The headline finding is that agent proliferation is outrunning accountability infrastructure. Organizations are adding agents faster than they’re building the frameworks to be responsible for them.

What “Accountability” Means Here

The report uses “accountability” in a specific, operational sense. It does not mean that someone is nominally responsible; it means the organization has:

- Clear attribution chains: when an agent takes an action, who is responsible for that action?

- Audit capability: can the organization reconstruct what the agent did, why, and what the downstream effects were?

- Intervention capacity: can the organization modify, halt, or reverse agent actions at the speed the agent operates?

- Regulatory standing: can the organization demonstrate to regulators that it has meaningful oversight of agent behavior?

On all four dimensions, the report finds that most organizations are behind their own agent deployments. They’ve deployed agents without building the governance infrastructure that would make those agents accountable.
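To make the first three dimensions concrete, here is a minimal Python sketch of what attribution, audit, and intervention might look like at the level of a single agent. It is an illustration only and is not drawn from the report: the names `GovernedAgent`, `AuditRecord`, `act`, `halt`, and `history` are hypothetical, and a real deployment would need durable storage, access control, and a way to reverse actions, none of which are shown here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One immutable entry: who owns the agent, what it did, and why."""
    agent_id: str
    owner: str          # hypothetical: the named human accountable for the agent
    action: str
    reason: str
    timestamp: datetime


@dataclass
class GovernedAgent:
    """Hypothetical wrapper that logs every action and supports a halt switch."""
    agent_id: str
    owner: str
    halted: bool = False
    log: list[AuditRecord] = field(default_factory=list)

    def act(self, action: str, reason: str) -> bool:
        """Record and permit the action; refuse it if the agent is halted."""
        if self.halted:
            return False
        self.log.append(
            AuditRecord(self.agent_id, self.owner, action, reason,
                        datetime.now(timezone.utc))
        )
        return True

    def halt(self) -> None:
        """Intervention: stop the agent from taking further actions."""
        self.halted = True

    def history(self) -> list[tuple[str, str, str]]:
        """Audit: reconstruct (owner, action, reason) in the order taken."""
        return [(r.owner, r.action, r.reason) for r in self.log]


agent = GovernedAgent("refund-bot", owner="jane.doe")
agent.act("issue_refund:order-1", "customer reported duplicate charge")
agent.halt()
blocked = agent.act("issue_refund:order-2", "second request")  # refused
```

The fourth dimension, regulatory standing, is not something a code sketch can settle. At most, a record like the one returned by `history()` could serve as one piece of the evidence an organization shows a regulator.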

The Scaling Problem

The “intelligence is scalable, accountability is not” formulation gets at something structurally important.

AI agent capacity scales with compute and model access — both of which have become cheaper and more accessible rapidly. You can deploy ten agents for roughly the cost of one from a year ago. You can deploy a hundred.

Human accountability structures don’t scale the same way. Adding a compliance officer doesn’t double your accountability capacity. Building audit trails for agent actions requires engineering investment that doesn’t compress on the same curve as AI capability. Training humans to meaningfully oversee agent behavior at the speed agents operate is a genuinely hard problem.

The result: every time an organization expands its agent footprint without proportionally investing in governance infrastructure, the accountability gap grows.

Eighteen Industries, One Finding

The 18-industry scope of the study is important because it rules out sector-specific explanations. This isn’t a financial services problem or a healthcare problem — it’s a structural problem that appears across industries at different levels of AI adoption.

Early-adopting industries (financial services, technology, professional services) are furthest along in deployment and furthest behind on governance. Later-adopting industries (manufacturing, energy, and many retail segments) are watching early-adopter failures and, in some cases, building governance infrastructure before deploying agents at scale.

This suggests the accountability gap isn’t inevitable. It’s a consequence of moving fast without building the governance layer. Organizations that learn from early adopters have the opportunity to build accountability infrastructure proactively rather than retroactively.

The Boardroom Framing

Accenture and Wharton framed this as a boardroom-level issue deliberately. The report’s audience isn’t AI practitioners — it’s boards and C-suites who are approving agent deployments without necessarily understanding the governance commitments those deployments require.

The framing implies an argument: if your organization is deploying agents without a corresponding investment in accountability infrastructure, that’s not just a technical risk. It’s a governance failure at the board level.

That’s a different conversation than “our AI team is working on safety guardrails.” It’s a conversation about fiduciary responsibility and organizational accountability in a world where agents are making consequential decisions continuously.

What Organizations Should Do

The report doesn’t provide a detailed remediation playbook (that’s consulting engagement territory), but the directional guidance is clear:

Map your agent footprint before expanding it. Many organizations don’t have a complete inventory of the agents they’ve already deployed. Accountability can’t be designed for what isn’t catalogued.

Define attribution before deployment. For every agent, the question “who is accountable for what this agent does?” should have a specific human answer before the agent is deployed.

Build audit infrastructure alongside agents, not after. Retroactive audit logging is significantly harder than designing for auditability from the start.

Set accountability as a procurement requirement. Organizations buying AI tools that include agent capabilities should be asking vendors how accountability is supported in the product — not assuming it’s handled.
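The first two recommendations, inventory and attribution, can be sketched as a small registry that refuses to admit an agent without a named owner. This is a hedged illustration rather than anything the report prescribes: `AgentRegistry`, `AgentEntry`, `register`, `can_deploy`, and `inventory` are hypothetical names, and the sketch assumes deployment tooling would consult `can_deploy` before launching anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEntry:
    """Catalogue entry for one agent (hypothetical schema)."""
    agent_id: str
    purpose: str
    owner: str  # assumed to be a named person, not a team alias


class AgentRegistry:
    """Hypothetical inventory: an agent must be registered before deployment."""

    def __init__(self) -> None:
        self._entries: dict[str, AgentEntry] = {}

    def register(self, agent_id: str, purpose: str, owner: str) -> AgentEntry:
        """Add an agent to the inventory; reject it if no owner is named."""
        if not owner.strip():
            raise ValueError(f"agent {agent_id!r} has no accountable owner")
        if agent_id in self._entries:
            raise ValueError(f"agent {agent_id!r} is already registered")
        entry = AgentEntry(agent_id, purpose, owner.strip())
        self._entries[agent_id] = entry
        return entry

    def can_deploy(self, agent_id: str) -> bool:
        """Only catalogued agents, which by construction have owners, may deploy."""
        return agent_id in self._entries

    def inventory(self) -> list[AgentEntry]:
        """The complete agent footprint, sorted by agent id."""
        return sorted(self._entries.values(), key=lambda e: e.agent_id)


registry = AgentRegistry()
registry.register("invoice-triage", "route incoming invoices", "sam.lee")
```

The design choice worth noting is that attribution is enforced at registration time, so the question of who is accountable cannot be deferred until after an agent is already running.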

The accountability gap Accenture and Wharton have documented isn’t a surprise finding. It’s a formal confirmation of what practitioners have been observing. The question for every organization is whether the confirmation changes behavior, or whether it gets filed next to the risk register and forgotten until something goes wrong.

The Accenture/Wharton joint report was covered by Fortune and Yahoo Finance, with findings confirmed on Accenture’s official insights page. The 18-industry scope and core finding cited here are drawn directly from the report’s public summary.